WARNING: USING AI FOR MEDICAL DIAGNOSES MAY BE HARMFUL TO YOUR HEALTH

Artificial intelligence (AI) is rapidly reshaping healthcare, particularly medical diagnosis. From analyzing medical images to predicting disease risk, AI-driven tools are increasingly being integrated into clinical workflows. The potential benefits are substantial (improved accuracy, faster diagnoses, and expanded access to care), but there are also meaningful risks that warrant careful scrutiny. Understanding both sides is essential for clinicians, policymakers, and patients alike.

The Benefits: Precision, Speed, and Scale

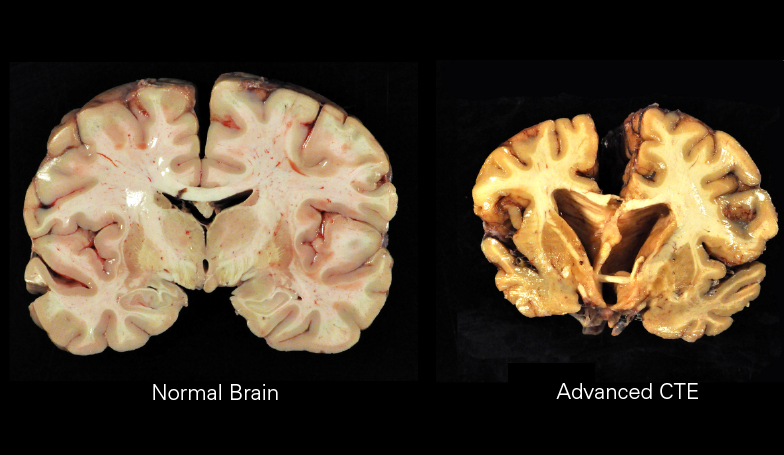

One of the most compelling advantages of AI in diagnosis is its ability to process vast datasets with remarkable speed and consistency. Machine learning models, particularly those trained on imaging data such as X-rays, MRIs, and CT scans, have demonstrated performance comparable to—or in some cases exceeding—that of human specialists. For example, AI systems can detect early signs of diseases like cancer, diabetic retinopathy, or pneumonia with high sensitivity, often identifying subtle patterns that may be missed by the human eye.
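To make "high sensitivity" concrete, here is a minimal sketch of how sensitivity and specificity are computed from a model's predictions. The labels and predictions are invented for illustration and do not come from any real diagnostic system; the function name `sensitivity_specificity` is likewise hypothetical.

```python
def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels, where 1 = disease present."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # diseased, flagged
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # diseased, missed
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # healthy, cleared
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # healthy, flagged
    # Sensitivity: share of true disease cases the model catches.
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    # Specificity: share of healthy cases the model correctly clears.
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return sensitivity, specificity


# Hypothetical data: 4 patients with the disease, 6 without.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]

sens, spec = sensitivity_specificity(y_true, y_pred)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
```

In this toy case the model catches three of the four disease cases and raises one false alarm among the six healthy patients. A screening tool is usually tuned toward high sensitivity, which tends to cost some specificity.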

Speed is another critical benefit. Traditional diagnostic processes can be time-consuming, involving multiple tests and specialist consultations. AI can streamline this by delivering near-instantaneous analysis, which is especially valuable in time-sensitive scenarios such as stroke or sepsis. Faster diagnosis can translate directly into earlier intervention and improved patient outcomes.

AI also offers scalability. In regions with limited access to healthcare professionals, AI tools can help bridge the gap by providing preliminary diagnostic support. This democratization of expertise has the potential to reduce disparities in healthcare access, particularly in underserved or rural communities.

The Risks: Bias, Opacity, and Overreliance

Despite these advantages, AI in medical diagnosis carries significant risks. One of the most pressing is algorithmic bias. AI systems are only as good as the data they are trained on. If training datasets lack diversity, whether in race, gender, age, or socioeconomic status, the resulting models may perform poorly on underrepresented populations. This can lead to misdiagnoses or unequal quality of care, exacerbating existing health disparities.
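One simple way to look for this kind of bias is to compute the same metric separately for each patient group and compare the results. The sketch below does this for sensitivity. It assumes each record carries a group label alongside the true and predicted diagnosis; the group names "A" and "B", the records, and the function name `sensitivity_by_group` are all hypothetical.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """records: iterable of (group, y_true, y_pred) with 1 = disease present.

    Returns {group: sensitivity}, counting only patients who have the disease.
    """
    tp = defaultdict(int)  # disease cases the model caught, per group
    fn = defaultdict(int)  # disease cases the model missed, per group
    for group, truth, pred in records:
        if truth == 1:
            if pred == 1:
                tp[group] += 1
            else:
                fn[group] += 1
    groups = set(tp) | set(fn)
    return {g: tp[g] / (tp[g] + fn[g]) for g in groups}


# Hypothetical records: (group, true label, predicted label).
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 1, 0), ("B", 0, 1),
]

per_group = sensitivity_by_group(records)
gap = max(per_group.values()) - min(per_group.values())
print(per_group, f"gap={gap:.2f}")
```

A large gap between groups, as in this invented example, would be a signal to examine how each group is represented in the training data. It is not proof of bias on its own, since small samples can produce gaps by chance.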

Another challenge is the “black box” nature of many AI models. Deep learning systems, in particular, often lack transparency in how they arrive at specific conclusions. This opacity can make it difficult for clinicians to trust or validate AI-generated diagnoses, especially in high-stakes situations. Without clear interpretability, accountability becomes murky—who is responsible if an AI system makes an error?

Overreliance is a further risk. As AI tools become more integrated into clinical practice, healthcare providers may defer too readily to algorithmic outputs, and their own diagnostic skills may erode as a result. This is particularly concerning when an AI system produces a confident but incorrect result. Maintaining a balance between human judgment and machine assistance is critical.

Regulatory and Ethical Considerations

The integration of AI into medical diagnosis raises complex regulatory and ethical questions. Ensuring the safety and efficacy of AI tools requires rigorous validation, ideally through clinical trials and real-world testing. Regulatory bodies are still adapting their frameworks to keep pace with rapid technological advancements.

Data privacy is another key issue. AI systems often rely on large volumes of patient data, raising concerns about consent, security, and potential misuse. Robust safeguards are necessary to protect sensitive health information while enabling innovation.

Ethically, there is a need to ensure that AI augments rather than replaces human care. The patient-provider relationship is built on trust, empathy, and communication—elements that AI cannot replicate. Any deployment of AI in diagnosis should preserve these human dimensions.

The Path Forward: Integration, Not Replacement

AI has the potential to be a powerful tool in medical diagnosis, but it should be viewed as a complement to—not a substitute for—clinical expertise. The most effective use cases are those where AI enhances human decision-making, providing additional data points or second opinions rather than definitive answers.

To realize this potential, stakeholders must invest in high-quality, diverse datasets; prioritize model transparency and interpretability; and establish clear regulatory standards. Ongoing education for healthcare professionals is also essential, ensuring they understand both the capabilities and limitations of AI tools.

In conclusion, AI in medical diagnosis represents a significant advancement with the capacity to improve outcomes and expand access to care. However, its deployment must be guided by rigorous standards, ethical considerations, and a commitment to equity. With thoughtful integration, AI can become a trusted ally in the pursuit of better health for all.